Does AI in Recruiting Improve Hiring Results? What the Data Says

Quick answer: Data shows that AI in recruiting can improve hiring outcomes on specific metrics. The strongest results appear when AI improves structure and consistency while humans retain final decision authority.

Hiring teams are under pressure from every direction. You are expected to move faster, compete for scarce technical skills, reduce bias, and justify hiring decisions with data. In that environment, AI in recruiting has become a serious consideration rather than an experiment.

A widely cited study found a 12% lift in offer rates and 17% higher retention when AI-supported screening was used. Still, many leaders are asking whether the technology is reliable.

Can it improve the hiring process without creating new risks? Can it help your team make better decisions, or just quicker ones?

The data suggests a measured answer. AI performs well when it enforces structure and consistency early in the process. But human judgment is still important where context, motivation, and team dynamics matter most.

What the Data Actually Tells Us

The data shows that AI in recruiting can improve hiring outcomes on specific metrics. The strongest results appear when AI improves structure and consistency while humans retain final decision authority.

An offer-rate lift doesn’t mean AI is “better” at judgment. It means AI is better at enforcing structure. Offer rate measures how many screened candidates ultimately receive offers. A 12% lift indicates fewer qualified candidates are filtered out too early.
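To make the arithmetic concrete, here is a minimal sketch of how an offer rate and its lift could be computed. The cohort numbers are invented for illustration, and the sketch assumes the reported 12% is a relative lift over the baseline rate, which the study summary above does not specify.

```python
def offer_rate(offers: int, screened: int) -> float:
    """Share of screened candidates who ultimately receive an offer."""
    if screened <= 0:
        raise ValueError("screened must be positive")
    return offers / screened


def relative_lift(baseline: float, treated: float) -> float:
    """Relative change of treated over baseline (0.12 means a 12% lift)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (treated - baseline) / baseline


# Hypothetical cohorts, invented for illustration only.
manual_rate = offer_rate(offers=50, screened=1000)        # manual screening
ai_supported_rate = offer_rate(offers=56, screened=1000)  # AI-supported screening

lift = relative_lift(manual_rate, ai_supported_rate)
print(f"Offer-rate lift: {lift:.0%}")
```

Under these made-up numbers, six more offers per thousand screened candidates is enough to produce a 12% relative lift, which is why the baseline rate matters when reading the headline figure.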

In practice, AI interviews standardize how companies evaluate candidates, whether through internal teams or outsourced IT staffing agencies. Each applicant receives the same questions, scored against the same criteria.

This reduces the inconsistency that often creeps into manual screening due to time pressure, interviewer fatigue, or subjective impressions.

In high-volume technology hiring, standardizing evaluation is especially important. When recruiters need to screen dozens or hundreds of applicants, unstructured interviews tend to reward confidence and familiarity rather than role-relevant capability.

AI-supported screening narrows the pool based on defined requirements before humans invest deeper time.

The biggest value comes from decision confidence. Teams can trust that shortlisted candidates meet baseline qualifications, allowing human interviewers to focus on deeper evaluation.

Standardized inputs matter more than advanced algorithms. Even basic AI outperforms unstructured interviews when criteria are clear.

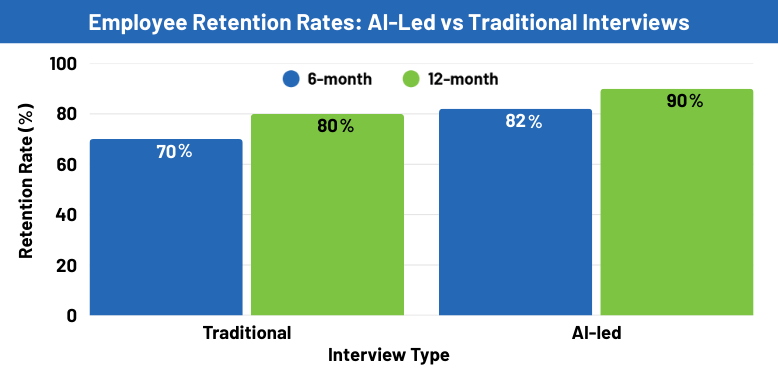

Why AI-Led Interviews Correlate With Higher Retention

Retention outcomes tell a more important story than offer rates. The reported improvement in retention suggests better alignment between candidates and roles, not automation magic.

AI interviews can reduce false positives when companies use them early. Candidates advance because they meet defined criteria, not because they impressed a single interviewer. This lowers the risk of hiring someone who interviews well but struggles in the role.

For IT staffing, early attrition is costly. SHRM estimates replacement cost can be 50–200% of salary, depending on the role. (Technical roles are likely on the higher end.) Retention is a cost and risk metric, not just an HR outcome.
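As a rough illustration of that range, the sketch below applies the 50–200% band to a single salary. The salary figure and the function name are hypothetical, and the result is a back-of-envelope estimate rather than a costing model.

```python
def replacement_cost_range(
    salary: float, low: float = 0.5, high: float = 2.0
) -> tuple[float, float]:
    """Return (low, high) replacement cost estimates for one departure.

    The default multipliers mirror the 50-200% of salary range cited above.
    """
    if salary < 0:
        raise ValueError("salary must be non-negative")
    return salary * low, salary * high


# Hypothetical salary for a technical role, for illustration only.
low_cost, high_cost = replacement_cost_range(120_000)
print(f"Estimated replacement cost: ${low_cost:,.0f} to ${high_cost:,.0f}")
```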

Research also shows that retention gains are strongest when AI is used before final interviews. Once humans take over for contextual evaluation, results stabilize rather than decline. This reinforces the importance of role clarity and shared evaluation standards.

Where AI Performs Well and Where It Falls Short

AI is effective when tasks benefit from scale and consistency. It struggles when nuance and context dominate. Understanding this distinction is essential for modern technology hiring.

AI performs well in:

- Resume screening at scale. Pattern recognition reduces manual bottlenecks.

- Structured interview scoring. Consistent criteria improve comparability.

- Trend analysis across large pools. Data reveals correlations humans often miss.

AI falls short in:

- Contextual nuance. Non-linear career paths confuse models.

- Motivation and intent. These require conversation and judgment.

- Team dynamics and collaboration style. Culture cannot be inferred from data alone.

Effective teams design the hiring process so AI supports repeatable tasks while humans own judgment-heavy decisions. Intentional role separation prevents over-automation and preserves accountability.

Fairness, Bias, and the Risk of Over-automation

AI reflects the data it is trained on. If historical hiring patterns favored certain backgrounds, AI may replicate those patterns unless corrected. This is an operational risk, not a theoretical one.

Bias enters through training data, scoring criteria, and thresholds. Without transparency, teams may struggle to explain decisions. This creates risk in regulated industries and erodes candidate trust.

Human checkpoints mitigate this risk. Regular audits, documented criteria, and outcome monitoring help teams identify drift early. Fairness becomes manageable when treated as a system responsibility rather than a moral abstraction.

Research consistently shows that ongoing audits outperform one-time reviews. Continuous monitoring allows teams to correct bias before it affects outcomes.
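One way such outcome monitoring might look in practice is a recurring check of selection rates by group. The sketch below is an assumption, not a method drawn from the research above: it uses the four-fifths rule from the US EEOC Uniform Guidelines as a screening heuristic, with invented group labels and counts. A flag here is a prompt for human review, not a legal finding.

```python
from collections import Counter


def selection_rates(records):
    """Compute the share of candidates advanced per group.

    records: iterable of (group_label, advanced) pairs, advanced being a bool.
    """
    totals, advanced = Counter(), Counter()
    for group, passed in records:
        totals[group] += 1
        if passed:
            advanced[group] += 1
    return {group: advanced[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        return {group: 0.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """Return groups whose ratio falls below the four-fifths threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical screening outcomes: 40 of 100 advance in A, 28 of 100 in B.
records = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 28 + [("B", False)] * 72
)

rates = selection_rates(records)
flagged = flag_groups(impact_ratios(rates))
print(rates, flagged)
```

Running a check like this on every screening cycle, rather than once at rollout, is what turns a one-time review into an ongoing audit.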

What This Means for Technology Teams

In regulated and high-skill environments, explainability matters as much as efficiency. Technology hiring and BFSI roles require clear decision trails and candidate trust.

AI adds value by:

- Standardizing early screening for compliance.

- Improving candidate experience through consistency.

- Reducing time spent on low-signal evaluations.

In turn, human oversight ensures:

- Contextual judgment remains central.

- Candidates receive meaningful feedback.

- Teams stay accountable for outcomes.

An optimized hiring process blends automation with checkpoints that protect quality and fairness. This balance supports scalability without sacrificing trust.

Additional Research Findings

Recent hiring research points to a simple truth: structured interviews work better.

Studies published over the past few years show that structured, data-driven interviews consistently outperform informal interviews at predicting job performance and retention.

When candidates are asked the same questions and evaluated against clear criteria, hiring decisions become more consistent and defensible.

This advantage has nothing to do with whether a human or an AI runs the interview. The structure is what matters. Research from journals like the Journal of Applied Psychology and Personnel Psychology confirms that structure reduces bias, improves role alignment, and sharpens early evaluation.

The takeaway is straightforward. Better hiring comes from better structure, not from removing humans or adding automation for its own sake.

Recent studies in organizational psychology and talent assessment show that structured interviews produce more reliable hiring decisions because they reduce variability in how candidates are evaluated.

When interviewers ask the same questions, score responses against defined criteria, and document decisions, hiring outcomes become more consistent and defensible. This holds true whether humans or AI deliver the structure.
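A structured scoring step of this kind can be sketched in a few lines. The criteria names, weights, and 1–5 rating scale below are hypothetical placeholders; the point is only that every candidate is scored against the same rubric, whether a person or a tool fills in the ratings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float  # relative importance; values here are hypothetical


# One shared rubric applied to every candidate for the role.
RUBRIC = (
    Criterion("problem_solving", 0.5),
    Criterion("system_design", 0.3),
    Criterion("communication", 0.2),
)


def score_candidate(ratings: dict, rubric=RUBRIC, scale=(1, 5)) -> float:
    """Weighted average of per-criterion ratings on a fixed scale.

    Raises if a criterion is missing or a rating is off-scale, so that
    incomplete evaluations cannot slip through silently.
    """
    lowest, highest = scale
    total_weight = sum(c.weight for c in rubric)
    total = 0.0
    for criterion in rubric:
        if criterion.name not in ratings:
            raise KeyError(f"missing rating for {criterion.name}")
        rating = ratings[criterion.name]
        if not lowest <= rating <= highest:
            raise ValueError(f"rating for {criterion.name} is off-scale")
        total += criterion.weight * rating
    return total / total_weight


# Hypothetical ratings for one candidate.
candidate_score = score_candidate(
    {"problem_solving": 4, "system_design": 3, "communication": 5}
)
print(round(candidate_score, 2))
```

Rejecting incomplete or off-scale ratings is a deliberate choice in this sketch: it forces the documentation step that makes decisions comparable and defensible later.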

Research published between 2022 and 2024 reaffirms that structured interviews are significantly better predictors of job performance and tenure than informal or conversational interviews.

Researchers attribute this advantage to reduced cognitive bias, clearer role alignment, and improved signal-to-noise ratio during early evaluation.

Importantly, these studies don’t argue for removing humans from the process. Instead, they show that the structure itself drives better outcomes, not the delivery mechanism.

AI tools perform well when they enforce consistency, but similar gains appear when humans rigorously apply structured frameworks.

To learn more about AI in hiring, see our FAQ below.

Next Steps for Hiring Teams

The data doesn’t support choosing between people and AI, but it does support designing better systems.

Use AI in recruiting to improve consistency and scalability. Keep humans accountable for final decisions. Measure success through retention and performance, not speed alone.

If your team is evaluating how AI fits into IT staffing or regulated environments, GDH helps organizations design hiring processes that balance structure, judgment, and accountability. Learn more about GDH’s approach to IT staffing to see how intentional process design supports better outcomes.

FAQ

Is AI biased in hiring?

AI can reflect bias if it learns from historically skewed data. Bias risk drops when teams use clear criteria, audit models regularly, and keep humans accountable. Fairness comes from oversight and process discipline, not automation alone.

Do AI interviews replace human interviews?

No. High-performing teams use AI for early screening and consistency, then rely on humans for final interviews, judgment, and team fit. The best results come from structured AI support paired with human decisions.

Is AI suitable for senior IT roles?

AI helps with early screening and skills validation, but senior IT roles require judgment, leadership evaluation, and context that AI cannot assess well. It should support, not drive, final decisions.

How do candidates feel about AI interviews?

Candidate response is generally neutral to positive when AI is used transparently and as part of a human-led process. Trust declines when decisions feel opaque or human interaction disappears.